
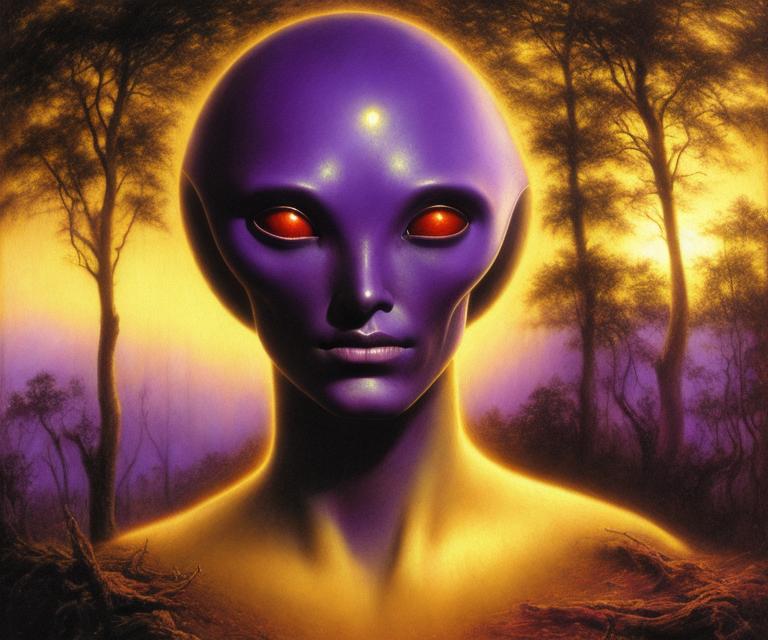

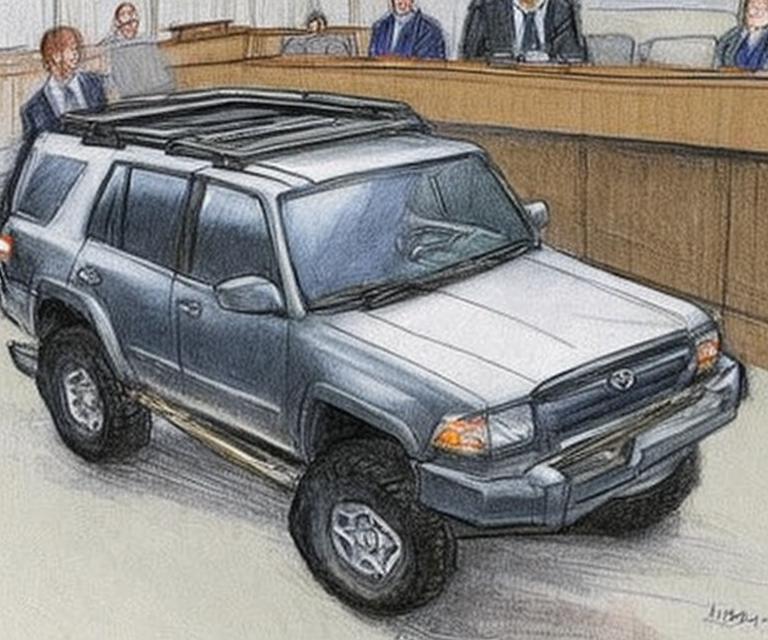

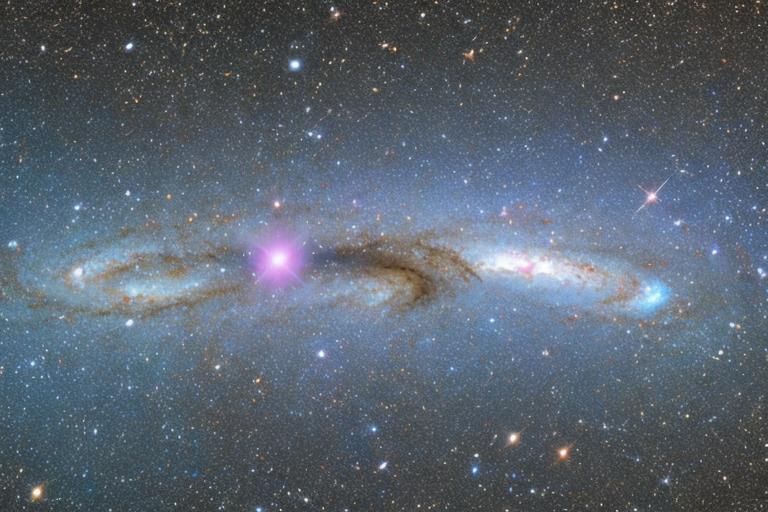

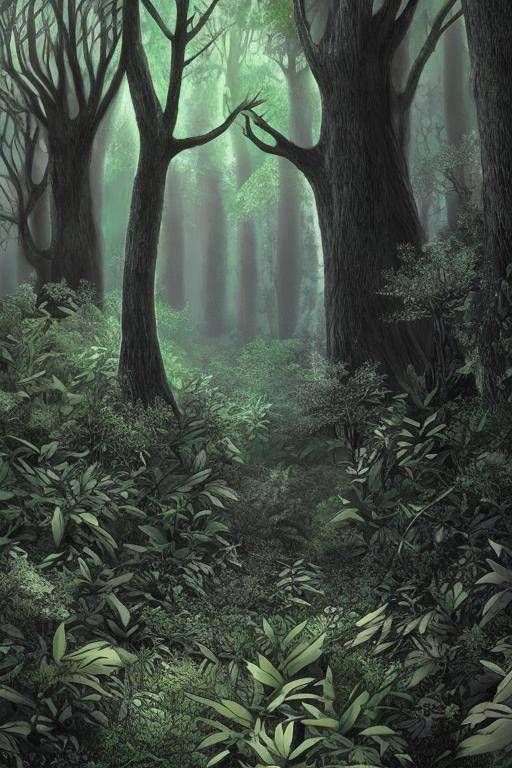

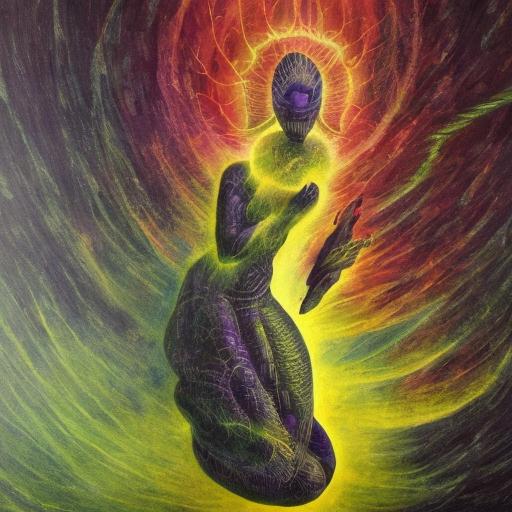

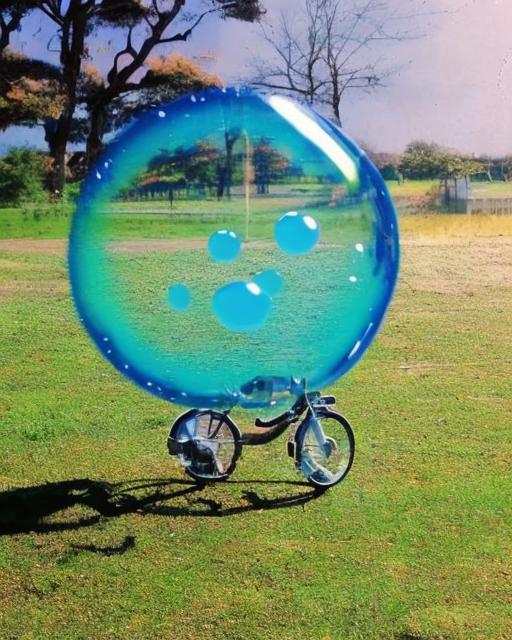

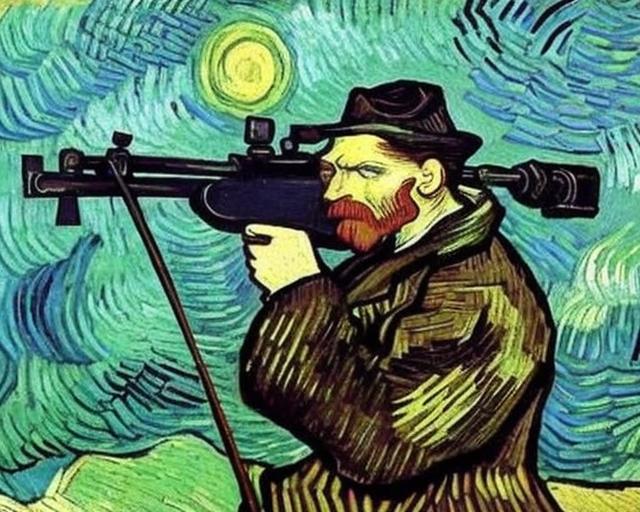

Pandemic Diary -- 1 June 2023

Warning: a wall of text, and then lots and lots of pictures!

Let us all appreciate Red Dwarf predicting the actual year 2023 (video). Kryten does his reinforcement learning with a holo-mallet.

At least four incredible things happened this week. I'm still processing all of them, and I need to triage my time pretty tightly to avoid decision paralysis; it's been years since I've had that kind of challenge. What a week. What a wonderful week. God, it's good to be alive. Happy June, everybody.

First, congratulations to (fellow Petals Projecters!) Tim Dettmers, Artidoro Pagnoni, Ari Holtzman, and Luke Zettlemoyer over at the University of Washington. Their QLoRA paper is a clever (and super-cheap!) approach to a difficult problem. I've been a big fan of fine-tuning the Fun Size language models for a while -- look, you make do with what you have -- but doing it to even the sleekest shoggoth is expensive and takes forever. Well, until it isn't and doesn't. Great googly moogly!

I... have a lot to say about this (it's incredible), but honestly this is one of those things that sounds lunatic until you see it in action (what's the big deal? a more efficient way to do low-rank adapter tuning?), and I still haven't really stopped jumping up and down with excitement, so stay tuned. We're going to see some things; you're going to hold them in your hand. If you want a peek, go check out Guanaco-33B -- there's a simple demo you can just run on HuggingFace -- but it gets a lot better than that. That's just a "look what you can do, broadly, with only 33 billion parameters!" demo. When you have boutique data and a specific use case to sharpen your model toward? Mmmmm, you can make power tools, power tools with a natural language interface that can outperform any general-purpose model at that task. It's so good.

All the noises the VC tools are making in the media right now about "AI existential risk, ooga-booga, be afraid!" are not unrelated to these things, by the way, if that wasn't completely obvious to you. We live in interesting times.

The second marvelous thing that happened in this marvelous week -- and this is even geekier, I'm sorry -- is that ggml, the C tensor library behind that llama.cpp thing, got CUDA support (and steering vectors like "no toast!", incidentally, which is also cool). It's not in the official release yet, but there are pull requests with it fully implemented, so we're off to the races.

This is another thing that maybe sounds minor and weird and mundane for something 'marvelous', but there are implications. I'm already a fan of ggml. Most of what I've been doing the last year is replicating models described by somebody else. I make improvements, sure: improving performance, tying them together with other models or programs to make little tools, or training the models to burnished brilliance using a few fancy tricks I've picked up or (re)invented along the way; then I move on to the next thing. But ggml, model quantization, and the tools around them make a lot of skunkworks stuff, at or near state-of-the-art, more accessible, and there's the potential of finding actually useful advances, new things, poking around there. For the past six weeks I've used it to play around close-up with samplers and quantitative benchmarks for LLMs, because there are a lot of interesting ways to produce text with a computer beyond beam search and top_p/top_k, and benchmarks for strong LLMs are becoming all terrible and meaningless. (Perplexity is seldom a measure of anything you care about at larger scales or after tuning, and it would be nice to bridge the gap between qualitative and quantitative metrics without throwing your hands up and asking another language model to maybe succeed at it.)
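For anyone who hasn't poked at samplers: the baseline methods named above, top-k and top-p (nucleus) filtering, plus the perplexity number, are each only a few lines. This is a minimal plain-Python sketch under stated assumptions, not ggml's or llama.cpp's sampler code: it assumes you already have a next-token probability vector, and the function names (`top_k_top_p_filter`, `sample`, `perplexity`) are hypothetical.

```python
import math
import random


def top_k_top_p_filter(probs, top_k=0, top_p=1.0):
    """Keep the allowed tokens of a next-token distribution and renormalize.

    top_k=0 disables the top-k cut; top_p=1.0 keeps the whole top-k set.
    """
    # Token indices sorted from most to least likely.
    order = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    if top_k > 0:
        order = order[:top_k]
    # Top-p is measured on the distribution renormalized after the top-k cut.
    k_mass = sum(probs[i] for i in order)
    kept, cumulative = [], 0.0
    for i in order:
        kept.append(i)
        cumulative += probs[i] / k_mass
        # Nucleus cut: stop at the smallest prefix covering top_p of the mass.
        if cumulative >= top_p:
            break
    total = sum(probs[i] for i in kept)
    filtered = [0.0] * len(probs)
    for i in kept:
        filtered[i] = probs[i] / total
    return filtered


def sample(probs, rng):
    """Draw one token index from a (possibly filtered) probability vector."""
    return rng.choices(range(len(probs)), weights=probs, k=1)[0]


def perplexity(token_logprobs):
    """exp of the mean negative log-likelihood per token (natural log)."""
    return math.exp(-sum(token_logprobs) / len(token_logprobs))


if __name__ == "__main__":
    probs = [0.5, 0.2, 0.15, 0.1, 0.05]
    nucleus = top_k_top_p_filter(probs, top_k=3, top_p=0.8)
    print(nucleus)
    rng = random.Random(0)
    print([sample(nucleus, rng) for _ in range(10)])
    # Spreading probability evenly over 4 tokens at every step gives a perplexity of about 4.
    print(perplexity([math.log(0.25)] * 8))
```

Applying top-k before top-p follows a common ordering, but it is a design choice; the more interesting samplers alluded to above are exactly the kind of thing you would swap in for this filter.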
You can run fast iterative tests, or long experiments on massive numbers of outputs from actually capable models, and get somewhere with tools like that without a massive compute cluster; a laptop will do. And if I get something useful or interesting, it's just a pull request or a few e-mails away, and those who know will know, and that's the way it should be: a nice open ecosystem where useful things are still getting done. I'm just here for peace, liberty, freedom, and the rapid proliferation of artificial intelligence; unfortunately, in the world we're building right now, I think we will not have the first three without the fourth.

But that isn't quite the reason this is kind of big. One of the things slowing native AI-powered consumer apps is that building anything on top of Python makes it a pain in the butt to release. (There are various solutions, of course, but they're all a pain in the butt.) Python dependency hell is one of the lower tiers, I'm pretty sure: you want to use virtual environments to keep your Python-based deep learning systems from breaking at some point down the line, and that kind of process is horrible for anybody who isn't accustomed to command-line terminals (and for many people who are). With a light, self-contained, low/no-dependency C++ version, you can compile it, download whatever model to pair with the thing, and off you go. That's something people who aren't terminal terminal dorks might actually use. Still not really ideal (what? you have to compile it or trust some binary?!), but a hell of a lot more user-friendly than "here's hoping you notice you didn't install pip in your new conda environment before you run the setup script and install the pippable stuff over whatever's in your base environment, while the conda stuff installs in the new place, so now neither environment works, merry Christmas".
And yes, this means that the ideal deep learning setup has at least six different versions of Python, ten of numpy, six of torch, three of TensorFlow, and four of HuggingFace transformers installed. This is normal. This is fine. But of course, now that ggml has CUDA support, you don't have to sacrifice GPU acceleration to use it; if a GPU is available, your models will cook. The ability to build things that work cross-platform (on edge devices OR powerful servers OR web browsers), with relatively easy installation, from one source code base, with hardware acceleration where available, should help app builders a lot.

Those are two niche and technical things, but they are big in themselves and their implications may be much bigger. Maybe in a month something else happens that changes the landscape and that cannot be explained in a single sentence without sounding like a Martian; who knows? I promise the next two incredible things are more relatable to normal people not living inside their own heads. You have to understand, things are perfectly ordinary over here.

The third wonderful thing that happened in Wonderland this week is that we had a birthday party. Actual real-world things happening in real life with real people! My sister and my father both arrived in this world on the same calendar day, and I am blessed to have them both, so we do the double birthday bash each year. It was absolutely gorgeous outside -- we spent most of the day in the backyard -- and I felt the sun and we had a cookout. The nieces and the brother-in-law and the dogs were there. Presents and presence were exchanged. We laughed a lot. It was beautiful. No matter where we end up riding with Phaeton in his hijacked chariot -- whether we crash and burn, or bring the dawn -- that's just where we're going, but this stuff along the way is what's going to matter; it's fleeting and irreplaceable.
I don't exist to make sand draw memes or write sonnets for me (although I am enjoying doing that tremendously, and it's probably necessary for me to do!).

The fourth thing is maybe more relatable too: those pretty pictures! Puzzle Box is still doing its thing. I don't have any reason to stop it; it insists on further practice and keeps spitting out better and better stuff, so I let it continue. It has to be around 2000 GPU-hours by now? Lucky epoch 13 dropped a couple of days ago, anyway, and is it ever an improvement. While there are a few special, less-obvious things I do to help this work so well, most of it is just good data and quality machine practice. If you can put together a good data set, tilted to whatever your own tastes are, and you can get at least an RTX 3090's worth of compute (there are some free-tier cloud compute options!), you can make one like this, too. Make sure your captioning game is on point, however you do it (manually, with machine captions, or a mix -- I'm using a mix): accurate captions tremendously improve how faithfully the model follows its conditioning (i.e., you're a lot more likely to get exactly what you ask for). I've always prioritized accuracy right alongside good aesthetics; it's wonderfully satisfying to see something strange work with no effort beyond some careful choreography at the start and all those quintillions of FLOPs of training. There are plenty of freely distributable, high-quality datasets out there. I've been collecting and trading datasets with other people and/or robots for a long time, and some of the public domain/CC-redistributable stuff will be going up in torrents.

How do I know when it's "done"? Who knows? I've still never seen a deep neural network that wasn't undertrained; more likely I'll just train Puzzle Box v2 until there's a better architecture for training a v3 on top.
Compute cost is trivial: a single RTX 3090 draws 350 W, so ~8.4 kWh/day, and electricity is cheap (I, blessedly, live with abundant and super-cheap electricity). It's like a Sally Struthers commercial ("for less than 70 cents a day, you can train your own paintbot!"). At some point it'll just sort of oscillate around whatever optimum it's found, but we're still making headway, so I don't know when that will be.

Wait for the loss to bottom out? That happened three epochs ago (at least, that's the current minimum), but epoch 13 is qualitatively significantly better than epoch 10, nothing appears to be overfitting, and sample diversity is tremendous, so. The thing to remember is that validation metrics, while nice, are optional. The loss number matters while the output isn't good yet; right now I could compute FID against some standard dataset, but fidelity to COCO is not what I'm optimizing for. I am optimizing for light, joy, and happiness.

How do you optimize for that, you wonder? Well, you steer the boat. If the boat doesn't head where you want, you steer it where you want to go: you show the model more of what you like until you get it. I hear that a few people are spending literally thousands of dollars a month on Midjourney generations. While I understand the magic of synthesizing imagery using the spirit of the Djinn, I can't even imagine wanting to pay that kind of money to access a model that I can't steer myself. I hope those poor people find salvation, and soon, because you can do so much better. Steer the boat!

Really, despite their completely different architectures, finetuning diffusion models and finetuning language models have a lot in common. At the end of the day they're both just big computational graphs, and you're trying to improve the quality of their output by having them learn from better, task-appropriate examples.
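The running-cost arithmetic in the compute-cost aside is easy to check. This sketch assumes the card draws its full 350 W around the clock (real draw varies, and the rest of the machine adds some), and the $0.08/kWh rate is an assumed example price, not the author's actual tariff; the helper names are hypothetical.

```python
def daily_energy_kwh(watts: float, hours: float = 24.0) -> float:
    """Energy used by a device drawing a constant `watts` for `hours`."""
    return watts * hours / 1000.0


def daily_cost(watts: float, price_per_kwh: float, hours: float = 24.0) -> float:
    """Electricity cost of one day of continuous running."""
    return daily_energy_kwh(watts, hours) * price_per_kwh


def break_even_price(budget_per_day: float, watts: float, hours: float = 24.0) -> float:
    """Highest price per kWh at which one day of running fits the budget."""
    return budget_per_day / daily_energy_kwh(watts, hours)


if __name__ == "__main__":
    # One RTX 3090 at the 350 W figure from the text.
    print(f"{daily_energy_kwh(350):.1f} kWh/day")  # 8.4 kWh/day
    # Assumed example rate, not a real tariff.
    print(f"${daily_cost(350, 0.08):.2f}/day at $0.08/kWh")
    print(f"70 cents/day allows up to ${break_even_price(0.70, 350):.3f}/kWh")
```

Working backwards, "less than 70 cents a day" holds for any electricity rate under roughly 8.3 cents per kWh.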
In pre-training, scale rules all in both cases, but after that, data quality dominates, and after seeing that unfold a few times, it's starting to seem to me that that's all there is. No matter the kind of deep learning model, there is this quiet latent ability under the surface that can't be accessed by simple conditioning without appropriate finetuning. And once your model is trained well enough to leverage that ability, quantitative metrics begin to mean less. Why is Puzzle Box v2-e13 qualitatively better than Puzzle Box v2-e10 -- in accuracy, in aesthetic quality, in output diversity, in recontextualizability, in inpainting, in outpainting, etc.; it's just making better output and it's not even really close -- while it reports a higher loss on the validation set? I dunno. Why do quantitative benchmarks for language models get harder to interpret, harder to compare, and less informative as you train further? I dunno that either, yet, but it's quite possibly the same general reason!

The current paradigm of broad but midwit models and narrowly superhuman tools that anybody can make is absolutely the sweet spot for this kind of thing, IMO, so we should enjoy it for however long it lasts. Should the massively multimodal models capable of driving robot killcops roll out in a year or three, we might not get so much grace from our overlords. (This is what many of the VC tools warning about "AI safety" are building or investing in now, BTW, so it's easy to see how much sincerity there is in this space. We can match the models soon enough -- there are no secrets in linear algebra, and systems engineering is only a little more tricky -- but the specialized robot hardware is more difficult. I continue whispering Voltaire's prayer, "Lord, make my enemies ridiculous"; it's worked thus far.)

Anyway, enough nonsense: here are pretty pictures that my model made that I think could go on the refrigerator.
I should be clear: this is Stable Diffusion 1.x, trained only at 512px resolution -- only a quarter-megapixel; this is a small model! -- although many of these generations are bigger (by training on non-square aspect ratios you can expand the size of your canvas quite a bit and still get good generations). These were generated with text prompts only, no extra conditioning (you can often do even better by using the aesthetic embeddings, control networks, negative prompting, etc.). I give you the prompt used with each picture. I prefer mostly short and uncomplicated prompts, although many of these are (I think) quite amusing. Leave 'vitamin words' and 'magic spells' and bizarre strings of styles or genres or artist names for weird generations that need them; a good model should give you pleasing results from straightforward conditioning.

I should say that besides the aesthetic quality (oh, it's okay) and the accuracy (it's doing all right), the diversity of output in this one pleases me. I'm not sure how all of those things got balanced in a U-Net with roughly 860 million parameters, beyond just training through that long, long tail, but between the pretraining, all the tuners before me, and my lunatic large-scale reinforcement learning and aesthetic preference projects, it's seen the world and it knows how to press my buttons. What a beautiful thing.
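The post doesn't say how the non-square training was set up. One common approach is aspect-ratio bucketing: group training images into a handful of resolutions whose pixel count stays at or below the 512x512 base, so a wide or tall canvas costs about the same as a square one. The sketch below is an assumption, not the author's pipeline; the 64-pixel step, the 256 to 1024 side limits, and the function names are illustrative choices.

```python
import math


def aspect_buckets(base=512, step=64, min_side=256, max_side=1024):
    """Enumerate (width, height) buckets with at most base*base pixels.

    Both sides are multiples of `step`. For each width, the height is the
    largest multiple of `step` that keeps the area within the square budget.
    """
    max_area = base * base
    buckets = set()
    for width in range(min_side, max_side + 1, step):
        # Largest step-aligned height that fits the pixel budget.
        height = min((max_area // width) // step * step, max_side)
        if height >= min_side:
            buckets.add((width, height))
            buckets.add((height, width))  # the mirrored portrait/landscape shape
    return sorted(buckets)


def nearest_bucket(width, height, buckets):
    """Pick the bucket whose aspect ratio is closest to the image's, in log space."""
    target = math.log(width / height)
    return min(buckets, key=lambda b: abs(math.log(b[0] / b[1]) - target))


if __name__ == "__main__":
    buckets = aspect_buckets()
    print(buckets)
    print(nearest_bucket(1920, 1080, buckets))
```

In a typical bucketing setup, each training image is resized and cropped to its nearest bucket, and batches are drawn from one bucket at a time so every tensor in a batch has the same shape.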
